I connect OpenAI, Claude, Gemini, or local open-source models to your app, scraper, or database. LLM pipelines, AI content classifiers, local model setup with Ollama — starting at €100. If you have data that needs to be processed, sorted, classified, or enriched with AI, contact me and I'll tell you what it takes.

Quick Answer

- Starting price: €100 for a basic LLM pipeline

- Full scraper + AI + database pipeline: €200–300

- Delivery: 2–7 days depending on service and complexity

- Supported models: GPT-4o, Claude 3.5, Gemini, Llama, Mistral (local models run via Ollama)

- Tech stack: Python, REST APIs, PostgreSQL, Docker

What I Build

- LLM pipeline setup — connect OpenAI, Claude, or open-source models to your data

- AI content classifiers — tag, sort, or categorize data automatically with an LLM

- Scraper + LLM + database pipelines — scrape, process with AI, insert clean results

- Local AI model setup — install and run Ollama or LM Studio on your server

- Prompt engineering — design reliable prompts that give consistent structured output

- RAG systems — connect a model to your documents or knowledge base

- Chatbot integration — embed an AI assistant into your site or Telegram bot; see my Telegram bot development guide if you need the bot built too
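To give a sense of what the classifier and prompt engineering items above involve, here is a minimal sketch, not production code. The label set, the `build_prompt` and `classify` names, and the `call_llm` callable (a stand-in for whichever model API is used) are all assumptions made for illustration.

```python
from typing import Callable, Sequence

# Hypothetical label set; a real project would use the client's own categories.
ALLOWED_LABELS = ("electronics", "clothing", "furniture")


def build_prompt(text: str, labels: Sequence[str]) -> str:
    """Ask the model to answer with exactly one label from a fixed list."""
    options = ", ".join(labels)
    return (
        f"Classify the text into exactly one of these categories: {options}.\n"
        "Answer with the category name only.\n\n"
        f"Text: {text}"
    )


def classify(text: str, call_llm: Callable[[str], str],
             labels: Sequence[str] = ALLOWED_LABELS) -> str:
    """Return one of `labels`, or "unknown" if the reply is not in the list."""
    reply = call_llm(build_prompt(text, labels))
    # Normalize whitespace, trailing punctuation, quotes, and casing.
    cleaned = reply.strip().strip(".\"'").lower()
    return cleaned if cleaned in labels else "unknown"


# Usage with a stand-in for a real model call:
fake_llm = lambda prompt: " Clothing.\n"
print(classify("Blue cotton t-shirt, sizes S to XL", fake_llm))  # prints "clothing"
```

Restricting the model to a closed list and mapping anything else to "unknown" is one simple way to keep output consistent; off-list replies can then be reviewed instead of silently stored.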

Real Project: Scraper + LLM + Database Pipeline

A client needed to collect product listings from multiple e-commerce sites, extract structured attributes (category, material, size range, target audience), and insert clean records into a PostgreSQL database for their inventory system. Manual tagging was taking 8 hours per day.

The solution was a three-stage Python pipeline:

- Scraper stage — Python scraper collects raw product data (title, description, images) from target sites on a daily schedule

- LLM processing stage — each product description is sent to GPT-4o-mini with a structured prompt that extracts specific attributes and returns clean JSON

- Database stage — parsed JSON is validated and inserted into PostgreSQL with error logging for any records that fail validation

Result: the 8 hours of daily manual tagging were eliminated. More than 500 products are now processed automatically every night, and the client reviews finished records in the database instead of tagging raw data.
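The LLM processing and database stages described above could be sketched roughly as follows. This is an illustration under assumptions, not the client's code: the field names are taken from the attribute list above, and `call_llm` and `insert_row` are hypothetical callables standing in for the model request and the PostgreSQL insert.

```python
import json
import logging

logger = logging.getLogger("pipeline")

# Field names assumed from the attributes listed in the project description.
REQUIRED_FIELDS = ("category", "material", "size_range", "target_audience")


def parse_attributes(raw_reply: str) -> dict:
    """Parse the model's reply; require every field to be a non-empty string."""
    data = json.loads(raw_reply)  # JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field in REQUIRED_FIELDS:
        value = data.get(field)
        if not isinstance(value, str) or not value.strip():
            raise ValueError(f"missing or empty field: {field}")
    return {field: data[field].strip() for field in REQUIRED_FIELDS}


def process_products(products, call_llm, insert_row):
    """Run the LLM and database stages per product; return (inserted, failed)."""
    inserted = failed = 0
    for product in products:
        try:
            attrs = parse_attributes(call_llm(product["description"]))
        except ValueError as exc:
            # Failed records are logged and skipped, not inserted.
            logger.error("validation failed for %r: %s", product.get("title"), exc)
            failed += 1
            continue
        insert_row({"title": product["title"], **attrs})
        inserted += 1
    return inserted, failed


# Usage with stand-ins for the model and the database:
rows = []
fake_llm = lambda desc: (
    '{"category": "shirts", "material": "cotton", '
    '"size_range": "S-XL", "target_audience": "adults"}'
)
print(process_products([{"title": "Tee", "description": "..."}], fake_llm, rows.append))
# prints (1, 0)
```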

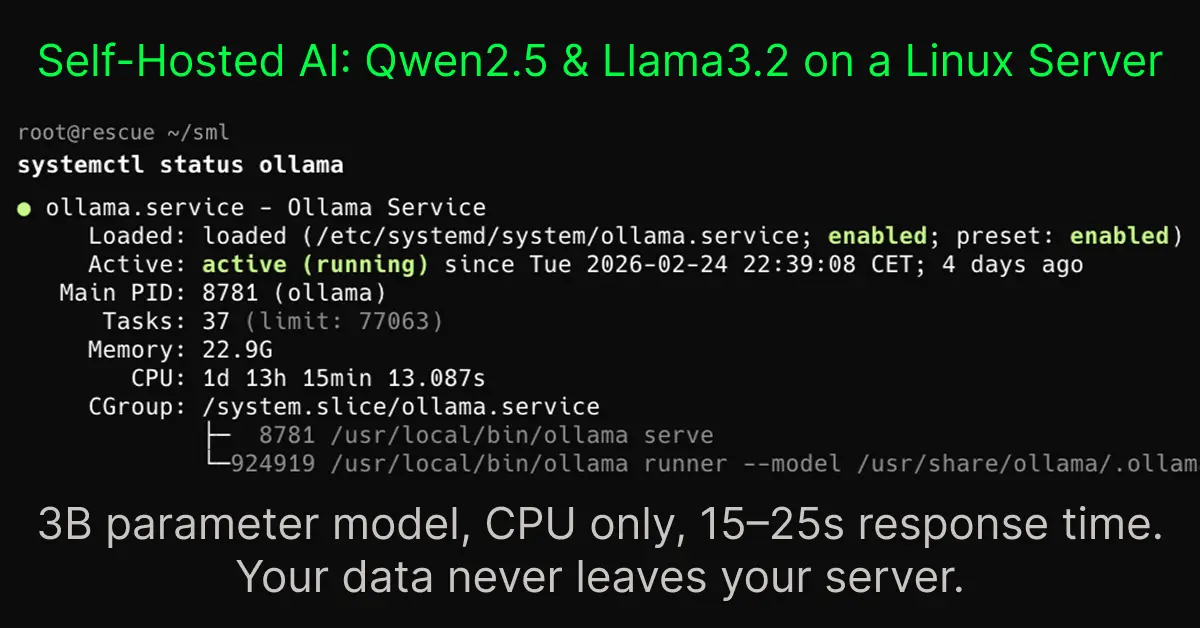

Local AI Model on a Private Server

For another project involving sensitive internal documents, the client needed AI processing without sending data to external APIs. I set up Ollama with a Llama 3 model on their Ubuntu VPS, configured GPU acceleration with CUDA, and built a Python wrapper that their team could call via a simple REST endpoint. All processing stayed on their infrastructure. If you need a reliable web server to host this kind of setup, see my Nginx configuration guide.
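As a rough idea of what the Python side of such a setup can look like, the sketch below calls Ollama's local HTTP API using only the standard library. It assumes Ollama is listening on its default port 11434 and that a model tagged `llama3` has been pulled; the function names are mine, and the sketch covers only the call to Ollama, not the REST endpoint exposed to the team.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3", timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]  # with stream=False the full text arrives in one object


# Example call (requires a running Ollama server):
# summary = generate("Summarize this document in two sentences: ...")
```

Because the request only ever goes to `localhost`, the document text never leaves the server.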

Cloud AI vs Local Models: Which to Choose

| Factor | Cloud API (OpenAI, Claude) | Local Model (Ollama, LM Studio) |
|---|---|---|
| Cost per request | Pay per token, scales with volume | No per-request fee after setup; fixed server cost |
| Data privacy | Data sent to external servers | Stays on your infrastructure |
| Model quality | Best available (GPT-4o, Claude 3.5) | Good, improving rapidly (Llama 3, Mistral) |
| Setup complexity | Simple API key integration | Requires server setup, GPU optional |
| Best for | Most business use cases, best results | High volume, sensitive data, offline needs |

For most projects I recommend starting with a cloud API. It is faster to deploy, usually gives better results, and the API fees are typically lower than the cost of the development time needed to set up and tune a local model. Local models make sense for high-volume processing or when data privacy is a hard requirement.

What You Get

- Full Python source code: Clean, documented code you own completely

- Prompt engineering: Prompts tested for consistent, structured output — not just first-pass attempts

- Error handling and fallbacks: Pipeline recovers from API failures, rate limits, and malformed responses

- Cost estimate: I calculate expected monthly API costs before you commit

- Deployment: Pipeline deployed and running on your server or cloud environment

- 7 days of post-delivery support: Bug fixes and prompt adjustments after delivery at no extra cost
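The error handling item above usually comes down to retrying transient failures with increasing delays. A minimal sketch, assuming a hypothetical `TransientError` that the calling code raises for timeouts, rate limits (HTTP 429), and server errors:

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, HTTP 429/5xx)."""


def call_with_retry(fn: Callable[[], T], max_attempts: int = 4,
                    base_delay: float = 1.0,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn(); on TransientError wait base_delay * 2**attempt, then retry."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: let the caller log or fall back
            sleep(base_delay * 2 ** attempt)
    raise ValueError("max_attempts must be at least 1")
```

With the defaults the waits are 1, 2, and 4 seconds. The `sleep` parameter exists so the delays can be replaced in tests.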

How It Works

- Contact me — describe what data you have, what you want AI to do with it, and what the output should look like.

- I design the pipeline — I confirm the approach, model choice, expected costs, and fixed project price within 24 hours.

- I build and test — pipeline built, prompts tuned, output validated on real data.

- Delivery and deployment — running on your server, you verify output quality, I adjust anything needed.

- Handoff — source code, documentation, and 7 days of support included.

AI & LLM Integration Pricing

Fixed price per project, agreed before work starts. API usage costs (OpenAI, Anthropic, Google) are separate and paid directly by you to the provider.

| Service | What It Includes | Price | Delivery |
|---|---|---|---|
| LLM Pipeline Setup | Connect OpenAI, Claude, or Gemini to your data or workflow | from €100 | 2–3 days |
| AI Content Classifier | Data in, tagged and sorted by LLM, structured output | from €120 | 3–4 days |
| Local AI Model Setup | Ollama + open-source model on your Linux server, GPU optional | from €150 | 2–4 days |
| Chatbot Integration | AI assistant embedded in your site or Telegram bot | from €150 | 3–5 days |
| Scraper + LLM + Database | Full pipeline: scrape, process with AI, insert clean results to DB | from €200 | 5–7 days |
| RAG System | Connect model to your documents or knowledge base | from €250 | 5–7 days |

API costs: OpenAI, Anthropic, and Google API usage is billed directly to your account. I calculate the expected monthly cost as part of the project scoping so you know before committing.
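The monthly estimate is simple arithmetic: requests per month multiplied by the token cost of one request. The sketch below shows the calculation; every number in it is a placeholder rather than a real price, so substitute the provider's current per-million-token rates and your own measured token counts.

```python
def monthly_api_cost(requests_per_day: int, input_tokens: int, output_tokens: int,
                     price_in_per_million: float, price_out_per_million: float,
                     days: int = 30) -> float:
    """Estimated monthly spend, in the provider's billing currency."""
    per_request = (input_tokens * price_in_per_million
                   + output_tokens * price_out_per_million) / 1_000_000
    return requests_per_day * days * per_request


# Placeholder figures only; check the provider's current price list.
estimate = monthly_api_cost(
    requests_per_day=500, input_tokens=400, output_tokens=100,
    price_in_per_million=1.0, price_out_per_million=2.0,
)
print(f"{estimate:.2f}")  # prints 9.00
```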

Frequently Asked Questions

- How much does AI integration cost for a small business?

- A basic LLM pipeline connecting OpenAI or Claude to your existing app or data starts at €100. A full scraper + LLM processing + database pipeline costs €200–300. Price depends on complexity and the number of integrations involved.

- How do I connect OpenAI to my website?

- OpenAI is accessed through its REST API, which I call from Python. Your data is sent as a prompt, the model's response comes back, and that response is used in your workflow. I handle the API setup, prompt engineering, response parsing, and wiring into your existing system.

- What is an LLM pipeline?

- An LLM pipeline is a sequence of steps where data is collected, sent to a language model for processing, and the result is used or stored. Example: scrape product descriptions, send them to GPT-4o for category classification, insert classified results into a database automatically.

- Can I run an AI model locally on my server?

- Yes. Ollama lets you run open-source models like Llama 3, Mistral, or Gemma locally without sending data to external APIs. I can install and configure local models on your Linux server, including GPU acceleration with CUDA if your server supports it.

- Which AI model should I use for my project?

- For most business tasks, GPT-4o or Claude 3.5 Sonnet via API give the best results. For cost-sensitive high-volume tasks, GPT-4o-mini or local models via Ollama are better. I recommend the right model after understanding your specific use case and budget.
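For the question above about connecting OpenAI, here is a minimal sketch of a single request to the Chat Completions endpoint using only the Python standard library. The function names and the system message are illustrative assumptions, and the API key is expected in the `OPENAI_API_KEY` environment variable; a real integration would add retries, logging, and response validation.

```python
import json
import os
import urllib.request

OPENAI_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(user_text: str, api_key: str,
                       model: str = "gpt-4o-mini") -> urllib.request.Request:
    """Build the HTTP request for one chat completion."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": user_text},
        ],
    }
    return urllib.request.Request(
        OPENAI_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(user_text: str, timeout: float = 60.0) -> str:
    """Send the text to the model and return its reply."""
    api_key = os.environ["OPENAI_API_KEY"]  # keep the key out of source code
    request = build_chat_request(user_text, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]


# Example call (requires a valid key and network access):
# print(ask("Classify this product: blue cotton t-shirt"))
```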